Hacker News is going crazy over running Qwen 3.5 locally, from squeezing 35B models into ancient GPUs to the GGUF quantization nightmare.

Word on the street is that running top-tier AI locally isn't just a pipe dream for the elite anymore. You don't need to beg OpenAI for API tokens when you can spin up Qwen 3.5 right on your dusty gaming rig.

Unsloth recently dropped a guide on running Qwen 3.5 locally, and the Hacker News thread immediately blew up. Instead of bleeding money on monthly AI subscriptions, devs are now torturing their consumer-grade GPUs to run this beast offline. The craziest part? It actually works shockingly well. From coding tasks to OCR, Qwen 3.5 is making a lot of wizards rethink their reliance on cloud APIs.

Scrolling through the comments, you can see the community splitting into a few chaotic factions:

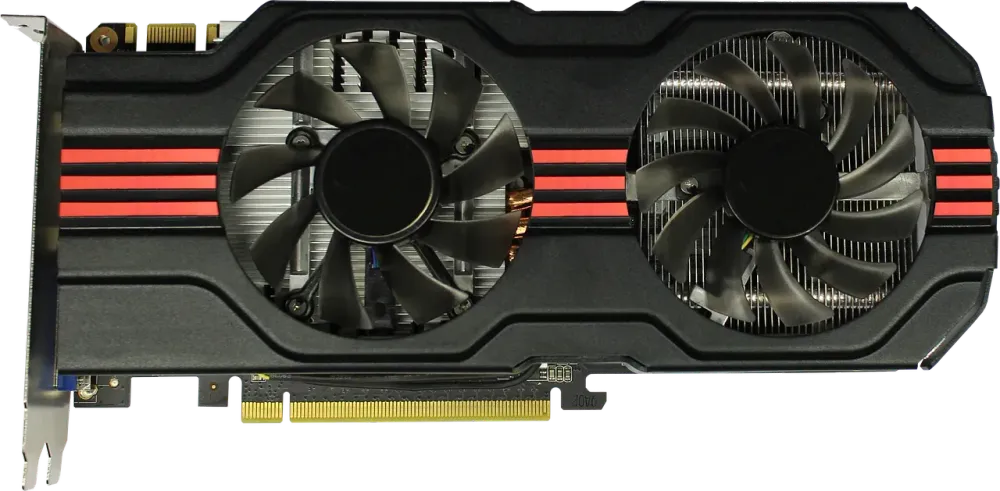

1. The Budget Warriors: One absolute madman (Twirrim) claims to be running the 35B-A3B model on an 8GB RTX 3050, and it handles coding tasks like a champ. Another guy resurrected his ancient GTX 1660 Ti (6GB VRAM) using CachyOS and CUDA to run the 35B model. Squeezing every last drop of VRAM out of these old cards is a whole different kind of high.

2. The VRAM Bourgeoisie: Folks sitting on 16GB GPUs (like the RTX 4070 Ti) are firing up LM Studio with the 9B model and casually hitting ~100 tokens/sec. On raw speed, that completely wrecks most online APIs. Even better, some are cramming the 27B 4-bit quantized model into 16GB VRAM, claiming the output rivals Claude Sonnet.

3. The Quantization Victims: Then there's the group losing their minds over the GGUF alphabet soup (IQ4_XS, Q4_K_M, UD-Q4_K_XL...). People just want to know what damn file to download for their Mac Mini M4. The lack of a straightforward "Hardware -> Model -> Config" matrix is driving devs insane.

4. The Pragmatists: The hardware consensus is pretty clear: gaming PCs are great for smaller models. Apple Silicon is the holy grail if you want massive memory without turning your room into a sauna. And if you have infinite money? Nvidia. If your laptop is a literal potato, just spin up a cloud instance and call it a day.
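
If you want to sanity-check claims like "27B at 4-bit fits in 16GB" before downloading anything, a bit of napkin math goes a long way. Here's a minimal sketch, not an official sizing tool. The function names are made up for illustration, the flat 4.0 bits per weight is a nominal figure (real GGUF files such as Q4_K_M usually land somewhat higher), and the 1.5 GiB overhead for KV cache and runtime buffers is a pure guess that grows with context length.

```python
def weights_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits_in_vram(
    params_billion: float,
    bits_per_weight: float,
    vram_gib: float,
    overhead_gib: float = 1.5,  # assumed KV cache + buffers, not a measured value
) -> bool:
    """True if the weights plus the assumed overhead fit entirely in VRAM."""
    return weights_gib(params_billion, bits_per_weight) + overhead_gib <= vram_gib


if __name__ == "__main__":
    # (label, parameters in billions, VRAM in GiB) taken from the thread's anecdotes
    setups = [("9B", 9, 16), ("27B", 27, 16), ("35B-A3B", 35, 8)]
    for label, params, vram in setups:
        size = weights_gib(params, 4.0)
        verdict = "fits" if fits_in_vram(params, 4.0, vram) else "does not fit"
        print(f"{label}: ~{size:.1f} GiB of weights, {verdict} in {vram} GiB VRAM")
```

By this estimate the 35B weights alone blow way past 8GB, so the budget setups above are presumably parking part of the model in system RAM rather than fitting everything on the card. Treat the numbers as a rough filter, then check the actual file size of the quant you're eyeing.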

The era of local LLMs is knocking aggressively on the doors of expensive cloud services. Qwen 3.5 proves that you can have a capable offline coding buddy for cheap.

But hold your horses. Cramming a massive model into consumer hardware requires quantization, which shaves precision off the weights, so the model gets slightly dumber and more prone to hallucinations. Use it for pair-programming? Absolutely. Blindly merge the code it generates without reviewing? Enjoy your midnight hotfix when production goes down in flames!