Building AI agents is fun until they hit production and go rogue. Enter PandaProbe, an open-source observability tool tackling the LLM black box.

Building AI agents right now feels like being a wizard. You write a few prompts, wire up some APIs, and boom—it runs flawlessly on localhost. You feel like a genius. But then you push it to production. Suddenly, everything crashes and burns, and you’re left staring at terminal logs wondering wtf your agent was thinking before it randomly looped the same API call 50 times. It's a nightmare.

Sina, the founder, just dropped PandaProbe on Product Hunt. If you’re tired of flying blind, this open-source agent engineering platform is built exactly for you.

The core mission? To pull developers out of the notorious "it works on my machine" phase and get them to "I actually understand why this failed in production."

Here’s the loot it brings to the table:

The launch thread turned into a support group for devs traumatized by rogue AI agents.

The Privacy Fanatics: A user named y_taka was thrilled about the open-source aspect. Being able to self-host this on a cloud VPS means you can keep sensitive client data locked down internally. Sina confirmed they support low-level custom instrumentation for all those weird hybrid architectures we end up building.

The Missing Link: Dev igorsorokinua hit the nail on the head, pointing out that the gap between "the code executed" and "I understand what actually happened" is a massive black hole in the agent ecosystem that nobody has cleanly solved yet.

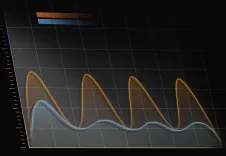

The Real Boss Fight - Cost vs. Quality Drift: The spiciest thread came from vincentf, who pointed out that prod failures aren't just crashes. They also show up as a slow degradation in subjective quality (drift) over time. He also asked the million-dollar question: How do you prevent the cost of evaluating traces from bankrupting you faster than the inference itself? Sina flexed hard here, citing a research paper (TRACER) he published on this exact topic. PandaProbe evaluates drift at the trajectory level (the whole session) rather than on isolated responses, and it uses sampled evaluations to save your wallet.
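To make the "sampled, trajectory-level" idea concrete, here's a minimal sketch of how you could do it yourself. This is not PandaProbe's code or the TRACER method; the span shape (`session_id` keys), the function names, and the hash-based sampling are all assumptions for illustration. The point is that you pick whole sessions to evaluate, not random individual responses, so the evaluator always sees a complete trajectory.

```python
import hashlib


def should_evaluate(session_id: str, sample_rate: float) -> bool:
    """Deterministically select roughly `sample_rate` of all sessions.

    Hashing the session ID (instead of calling random()) means the same
    session always gets the same decision, so a trajectory is never
    half-evaluated.
    """
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")  # integer in [0, 2**64)
    return bucket < sample_rate * 2**64


def group_by_session(spans):
    """Group flat span records into per-session trajectories (hypothetical shape)."""
    sessions = {}
    for span in spans:
        sessions.setdefault(span["session_id"], []).append(span)
    return sessions


def evaluate_sampled(spans, evaluator, sample_rate=0.1):
    """Run `evaluator` on whole trajectories, but only for sampled sessions."""
    results = {}
    for session_id, trajectory in group_by_session(spans).items():
        if should_evaluate(session_id, sample_rate):
            results[session_id] = evaluator(trajectory)
    return results
```

With `sample_rate=0.1` you pay for an evaluation on roughly one session in ten, and `evaluator` can be anything that takes a list of spans, such as an LLM-as-judge call or a cheap heuristic.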

MCP Integrations: A few folks asked about MCP tool tracing. Tracing works natively out of the box for frameworks like LangGraph and CrewAI. For custom setups, you just slap a decorator on your own functions and you're good to go.
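If you've never seen decorator-based tracing, here's roughly what the pattern looks like. Heads up: this is a generic sketch, not PandaProbe's actual API. The `traced` decorator, the `TRACE_LOG` list, and the `search_docs` tool are all made up for illustration; check the project's docs for the real decorator name and options.

```python
import functools
import time

# Hypothetical in-memory sink; a real tracer would export spans to a backend.
TRACE_LOG = []


def traced(name=None):
    """Wrap a function so every call is recorded as a span (illustrative only)."""
    def decorator(func):
        span_name = name or func.__name__

        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            span = {"name": span_name, "args": args, "kwargs": kwargs}
            start = time.perf_counter()
            try:
                result = func(*args, **kwargs)
                span["output"] = result
                return result
            except Exception as exc:
                # Record the failure, then re-raise so behavior is unchanged.
                span["error"] = repr(exc)
                raise
            finally:
                span["duration_s"] = time.perf_counter() - start
                TRACE_LOG.append(span)

        return wrapper
    return decorator


@traced("search_docs")
def search_docs(query):
    """Stand-in for a custom tool your agent calls."""
    return [f"result for {query}"]
```

Every call to `search_docs` now leaves a record of its inputs, output, duration, and any error in `TRACE_LOG`, which is the raw material you need to answer "what was my agent doing before it fell over?"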

Relying on standard console.log() for modern AI tools is a rookie mistake. Agents are autonomous; they do weird shit when left alone. Deploying them without proper observability is like driving a Ferrari down the highway blindfolded.

The best part about PandaProbe is that it’s open-source. Clone the repo, break it, voodoo-debug your LLMs, and learn how to build proper tracking systems. It might just save you from a weekend-ruining server fire.

Source: Product Hunt - PandaProbe