You use Docker every day, but did you know it bypassed corporate firewalls using a 90s dial-up tool? Let's dive into the HN drama and Linux dependency hell.

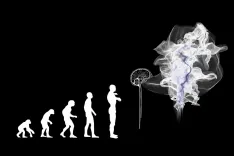

A decade ago, our absolute favorite excuse as developers was: "Well, it works on my machine!" Then Docker rolled up, looked us dead in the eye, and said: "Bet. Just ship your entire damn machine to production then." And just like that, an industry standard was born.

Recently, Hacker News has been buzzing about an academic article titled "A decade of Docker containers." Reading through the comments from the graybeard devs in that thread is a wild ride full of arcane tech lore. Grab a coffee, let's break down what the hell actually happened.

The original CACM article is a long academic read, but here are the two juiciest plot twists for you lazy readers:

First, Docker actually launched in 2013, making it 12 years old. Why call it a decade? Because academic peer review is so painfully slow that by the time the paper was accepted, the authors realized "12 years" killed the vibe. So they rounded it to "A decade" because it sounds way cooler.

Second, the massive SLIRP hack. In its early days, Docker was getting blocked left and right by draconian corporate IT firewalls. The solution? Docker wizards dug up SLIRP, a 1990s tool originally meant for dial-up shell accounts and Palm Pilots. Instead of using proper network bridging, they used SLIRP to turn container traffic into ordinary socket calls made by a process on the host, so to the firewall it looked like traffic from any other app on the machine. It sailed straight past enterprise security. An absolutely unhinged hack that became foundational tech!
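If "translate traffic through host system calls" sounds hand-wavy, here is a rough sketch of the core trick in Python. To be clear, this is not Docker's code: a real SLIRP-style stack also has to parse raw network frames with a user-space TCP/IP implementation, and the names `pipe`, `relay_once`, `echo_once`, and `demo` are made up for this illustration. It only shows the last step, where a stream from the "container side" is re-originated as a plain `connect()` made by a normal host process.

```python
import socket
import threading


def pipe(src, dst):
    """Copy bytes from src to dst until src closes, then half-close dst."""
    try:
        while True:
            data = src.recv(4096)
            if not data:
                break
            dst.sendall(data)
    except OSError:
        pass
    finally:
        try:
            dst.shutdown(socket.SHUT_WR)
        except OSError:
            pass


def relay_once(listener, target):
    """Accept one client and replay its stream as an ordinary host connection."""
    client, _ = listener.accept()
    # The key move: the outbound connection is a normal connect() issued by
    # this host process, so the OS and firewall see regular app traffic.
    upstream = socket.create_connection(target)
    worker = threading.Thread(target=pipe, args=(client, upstream), daemon=True)
    worker.start()
    pipe(upstream, client)
    worker.join()
    client.close()
    upstream.close()


def echo_once(listener):
    """Stand-in for 'the internet': echo one connection back to the sender."""
    conn, _ = listener.accept()
    with conn:
        while True:
            data = conn.recv(4096)
            if not data:
                break
            conn.sendall(data)


def demo():
    """Send a message through the relay to the echo server and return the reply."""
    echo_srv = socket.create_server(("127.0.0.1", 0))   # port 0: let the OS pick
    relay_srv = socket.create_server(("127.0.0.1", 0))
    threading.Thread(target=echo_once, args=(echo_srv,), daemon=True).start()
    threading.Thread(
        target=relay_once, args=(relay_srv, echo_srv.getsockname()), daemon=True
    ).start()

    with socket.create_connection(relay_srv.getsockname()) as container_side:
        container_side.sendall(b"hello from the container")
        container_side.shutdown(socket.SHUT_WR)
        chunks = []
        while True:
            data = container_side.recv(4096)
            if not data:
                break
            chunks.append(data)
    return b"".join(chunks)


if __name__ == "__main__":
    print(demo())
```

The point of the design: nothing here needs a bridge interface or special privileges, just the same socket calls any desktop app makes.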

Down in the comment section, the community split into factions, and the combat was glorious:

1. The "Ship the Machine" Cynics: User talkvoix ruthlessly pointed out: We took the oldest dev excuse ("it works on my machine") and turned it into standard architecture. redhanuman delivered the fatal blow: 10 years later, we are doing the exact same shit with AI. Data scientists say, "It works in my Jupyter notebook," so we containerize the notebook and call it a "data pipeline." The abstraction always wins because fixing the core problem is too hard.

2. The Linux Haters: One guy straight-up snapped: "Linux user space is an abject disaster of a design... Docker should not need to exist." According to this faction, if running programs and managing dependencies on Linux weren't such a chaotic dumpster fire, we wouldn't need this heavy containerization layer to begin with.

3. The Nix & Podman Hipsters: Some devs advocated ditching Docker for Nix or Process Compose to avoid the headache of port mapping and volume mounts. But the Ops guys fired back immediately: Docker's value isn't just local dev. Chucking containers onto a cloud VPS or an orchestration engine (like Kubernetes) is where the real magic happens, with far less hardware overhead than running full VMs.

4. The Dockerfile Despair: Everyone collectively groaned about copying and pasting janky shell scripts and cargo-culting RUN apt-get upgrade. We all wish for something more declarative than bash commands. Yet, we have to admit: that ugly, dirty flexibility is exactly why Dockerfile remains the undisputed king.

What can we learn from this nostalgic drama?

docker-compose up -d.